Recursion is not intrinsically better or worse than loops - each has advantages and disadvantages, and those even depend on the programming language (and implementation).

Technically, iterative loops fit typical computer systems better at the hardware level: at the machine code level, a loop is just a test and a conditional jump, whereas recursion (implemented naively) involves pushing a stack frame, jumping, returning, and popping the frame back off the stack. OTOH, many cases of recursion (especially those that are trivially equivalent to iterative loops) can be written so that the stack push / pop can be avoided; this is possible when the recursive function call is the last thing that happens in the function body before returning, and the technique is commonly known as tail call optimization (or tail recursion optimization). A properly tail-call-optimized recursive function is mostly equivalent to an iterative loop at the machine code level.
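To make the comparison concrete, here is a minimal sketch in C of the same computation written both ways. The functions `sum_loop` and `sum_rec` are hypothetical names used only for illustration, and whether the recursive call actually becomes a jump depends on the compiler and its optimization settings.

```c
/* Sum of the integers 1..n, written two ways. */

/* Iterative version: a test and a conditional jump around the body. */
unsigned long sum_loop(unsigned int n) {
    unsigned long total = 0;
    while (n > 0) {
        total += n;
        n--;
    }
    return total;
}

/* Tail-recursive version: the recursive call is the last thing the
 * function does, so a compiler that performs tail call optimization
 * may turn it into a jump. The C standard does not guarantee this. */
unsigned long sum_rec(unsigned int n, unsigned long total) {
    if (n == 0) {
        return total;
    }
    return sum_rec(n - 1, total + n);
}
```

Calling `sum_loop(10)` and `sum_rec(10, 0)` gives the same result; the accumulator argument of `sum_rec` starts at 0.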

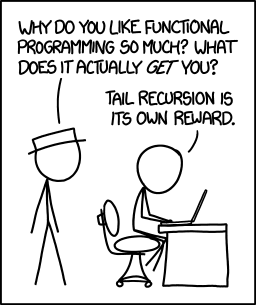

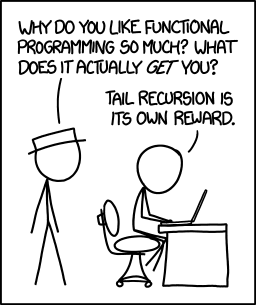

Another consideration is that iterative loops require destructive state updates, which makes them incompatible with pure (side-effect free) language semantics. This is the reason why pure languages like Haskell do not have loop constructs at all, and many other functional-programming languages either lack them completely or avoid them as much as possible.

The reason why these questions appear so much in interviews, though, is that in order to answer them you need a thorough understanding of many vital programming concepts (variables, function calls, scope, and of course loops and recursion), and you need the mental flexibility to approach a problem from two radically different angles and to move between different manifestations of the same concept.

Experience and research suggest that there is a line between people who have the ability to understand variables, pointers, and recursion, and those who don't. Almost everything else in programming, including frameworks, APIs, programming languages and their edge cases, can be acquired through studying and experience, but if you are unable to develop an intuition for these three core concepts, you are unfit to be a programmer. Translating a simple iterative loop into a recursive version is about the quickest possible way of filtering out the non-programmers - even a rather inexperienced programmer can usually do it in 15 minutes, and it's a very language-agnostic problem, so the candidate can pick a language of their choice instead of stumbling over idiosyncrasies.

If you get a question like this in an interview, that's a good sign: it means the prospective employer is looking for people who can program, not people who have memorized a programming tool's manual.

Tail call optimization is present in many languages and compilers. In this situation, the compiler recognizes a function of the form:

```c
int foo(int n) {
    ...
    return bar(n);
}
```

Here, the compiler is able to recognize that the value being returned is simply the result of another function call, and it can replace that call (which would otherwise need a new stack frame) with a jump.

Realize that the classic factorial method:

```c
int factorial(int n) {
    if (n == 0) return 1;
    if (n == 1) return 1;
    return n * factorial(n - 1);
}
```

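is not tail call optimizable as written, because the result of the recursive call still has to be multiplied by n after it returns, so the call is not the last operation in the function. (Example source code and compiled output)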

To make this tail call optimizable, carry the running product along in an accumulator parameter:

```c
int _fact(int n, int acc) {
    if (n == 1) return acc;
    return _fact(n - 1, acc * n);
}

int factorial(int n) {
    if (n == 0) return 1;
    return _fact(n, 1);
}
```

Compiling this code with `gcc -O2 -S fact.c` produces the following (the -O2 is necessary to enable the optimization in the compiler, but with the additional optimizations of -O3 the output gets hard for a human to read):

```asm
_fact(int, int):
        cmpl    $1, %edi
        movl    %esi, %eax
        je      .L2
.L3:
        imull   %edi, %eax
        subl    $1, %edi
        cmpl    $1, %edi
        jne     .L3
.L2:
        rep ret
```

(Example source code and compiled output)

In the .L3 segment you can see a jne (a conditional jump back to the top of the loop) rather than a call, which would perform a subroutine call with a new stack frame.

Please note that this was done in C. Tail call optimization in Java is hard and depends on the JVM implementation. That said, I haven't seen any JVM that does it, both because it is hard and because the Java security model relies on stack frames, which are exactly what TCO eliminates. The tail-recursion + java and tail-recursion + optimization tag sets are good ones to browse. You may find that other JVM languages handle tail recursion better: try Clojure (which requires the explicit recur form to optimize a tail call) or Scala.

That said,

There is a certain joy in knowing that you wrote something right - in the ideal way that it can be done.

And now, I'm going to get some scotch and put on some German electronica...

To the general question of "methods to avoid a stack overflow in a recursive algorithm"...

Another approach is to include a recursion counter. This is more for detecting infinite loops caused by situations beyond one's control (and poor coding).

The recursion counter takes the form of

```c
int foo(int arg, int counter) {
    if (counter > RECURSION_MAX) { return -1; }
    ...
    return foo(arg, counter + 1);
}
```

Each time you make a call, you increment the counter. If the counter gets too big, you error out (here, just a return of -1, though in other languages you may prefer to throw an exception). The idea is to prevent worse things from happening (out-of-memory errors) when a recursion goes much deeper than expected and is likely an infinite loop.
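A runnable sketch of the same guard, assuming a hypothetical function `chain_length` that follows index links through an array; the limit of 1000 for `RECURSION_MAX` is an arbitrary value picked for the example, not a recommendation.

```c
#define RECURSION_MAX 1000  /* hypothetical limit; tune to the expected depth */

/* Follows next[] links starting at index i until it finds -1 and
 * returns the number of hops taken. Returns -1 if the depth limit is
 * exceeded, for example because the links form a cycle. */
int chain_length(const int *next, int i, int counter) {
    if (counter > RECURSION_MAX) {
        return -1;              /* deeper than expected: give up */
    }
    if (next[i] == -1) {
        return counter;         /* reached the end of the chain */
    }
    return chain_length(next, next[i], counter + 1);
}
```

With the links `{1, 2, -1}` and a starting index of 0 the function returns 2; with the cyclic links `{1, 0}` it stops at the limit and returns -1 instead of recursing until the stack is exhausted.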

In theory, you shouldn't need this. In practice, I've seen poorly written code that has hit this because of a plethora of small errors and bad coding practices (multithreaded concurrency issues where something changes something outside the method that makes another thread go into an infinite loop of recursive calls).

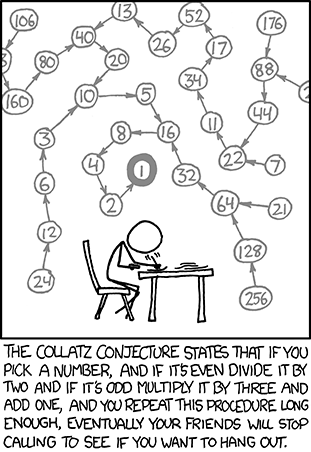

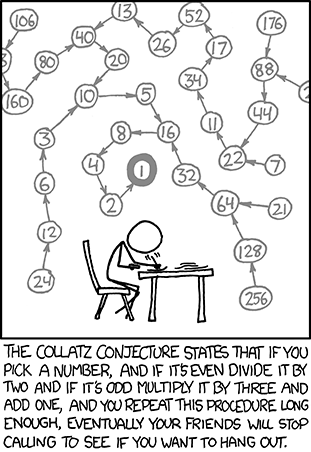

Use the right algorithm and solve the right problem. Specifically for the Collatz Conjecture, it appears that you are trying to solve it in the xkcd way:

You are starting at a number and doing a tree traversal. This rapidly leads to a very large search space. A quick run to calculate the number of iterations for the correct answer results in about 500 steps. This shouldn't be an issue for recursion with a small stack frame.
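Following a single Collatz chain needs no tree traversal and no recursion at all. A minimal sketch in C, assuming a hypothetical helper named `collatz_steps` (it is not taken from the question) and an input of at least 1:

```c
/* Counts how many Collatz steps it takes to get from n down to 1:
 * n / 2 when n is even, 3n + 1 when n is odd. Assumes n >= 1. */
unsigned int collatz_steps(unsigned long long n) {
    unsigned int steps = 0;
    while (n != 1) {
        n = (n % 2 == 0) ? n / 2 : 3 * n + 1;
        steps++;
    }
    return steps;
}
```

For example, `collatz_steps(6)` walks 6, 3, 10, 5, 16, 8, 4, 2, 1 and returns 8. The loop keeps only two variables, so the length of the chain has no effect on stack usage.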

While knowing the recursive solution is not a bad thing, one should also realize that many times the iterative solution is better. A number of ways of approaching converting a recursive algorithm to an iterative one can be seen on Stack Overflow at Way to go from recursion to iteration.


Actually you should break the function down first:

A loop has a few parts:

1. the header: processing before the loop, which may declare some new variables.
2. the condition: when to stop the loop.
3. the actual loop body: it changes some of the header's variables and/or the parameters passed in.
4. the tail: what happens after the loop, and the returned result.

Or to write it out:
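As a concrete instance, here is a minimal sketch in C with each part marked in a comment. The function `sum_iterative` and its parameters are hypothetical, chosen only for illustration:

```c
#include <stddef.h>

/* Hypothetical example: sum the elements of an array. */
long sum_iterative(const int *values, size_t count) {
    /* header: set up the variables the loop works on */
    long total = 0;
    size_t i = 0;

    /* condition: keep going while there are elements left */
    while (i < count) {
        /* loop body: updates the header variables */
        total += values[i];
        i++;
    }

    /* tail: what happens after the loop, and the result */
    return total;
}
```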

Using these blocks to make a recursive call is pretty straightforward:
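A minimal sketch of that rearrangement in C, assuming a hypothetical array-summing loop as the starting point (the names `sum_recursive` and `sum_recursion` are made up for this example). The header variables become parameters of a helper, the negated loop condition becomes the base case that returns the tail, and the loop body ends in the recursive call:

```c
#include <stddef.h>

/* Helper: one call per former loop iteration. total and i are the
 * header variables, now passed along as parameters. */
static long sum_recursion(const int *values, size_t count,
                          long total, size_t i) {
    if (!(i < count)) {
        return total;           /* tail: the loop condition no longer holds */
    }
    /* loop body, folded into the arguments of the tail call */
    return sum_recursion(values, count, total + values[i], i + 1);
}

long sum_recursive(const int *values, size_t count) {
    /* header: initial values of the header variables */
    return sum_recursion(values, count, 0, 0);
}
```

Here `sum_recursion` plays the role that the text calls `foo_recursion`.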

Et voilà; a tail recursive version of any loop.

`break`s and `continue`s in the loop body will still have to be replaced with `return tail` and `return foo_recursion(params, modified_header_vars)` as needed, but that is simple enough.

Going the other way is more complicated, in part because there can be multiple recursive calls. This means that each time we pop a stack frame there can be multiple places where we need to continue. There may also be variables that we need to save across the recursive call, along with the original parameters of the call.

We can use a switch to work around that:
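A minimal sketch of that idea in C, assuming the function being converted is the naive doubly recursive Fibonacci (`fib(n) = fib(n - 1) + fib(n - 2)`). The names `fib_with_stack`, `struct frame`, and `MAX_DEPTH` are hypothetical. Each frame stores the original parameter, the value saved across the second call, and a stage number that tells the switch where to continue:

```c
#include <stddef.h>

#define MAX_DEPTH 40  /* assumed upper bound on n for this sketch */

struct frame {
    int n;            /* original parameter of the call */
    int stage;        /* where to continue when the frame is resumed */
    unsigned long a;  /* result of fib(n - 1), saved across the second call */
};

unsigned long fib_with_stack(int n) {
    struct frame stack[MAX_DEPTH + 1];
    size_t top = 0;
    unsigned long result = 0;  /* value "returned" by the last finished call */

    if (n < 0 || n > MAX_DEPTH) {
        return 0;              /* outside the range this sketch supports */
    }

    stack[top++] = (struct frame){ n, 0, 0 };

    while (top > 0) {
        struct frame *f = &stack[top - 1];
        switch (f->stage) {
        case 0:  /* function entry */
            if (f->n < 2) {
                result = (unsigned long)f->n;
                top--;                                   /* return */
            } else {
                f->stage = 1;
                stack[top++] = (struct frame){ f->n - 1, 0, 0 };  /* call fib(n - 1) */
            }
            break;
        case 1:  /* back from fib(n - 1) */
            f->a = result;
            f->stage = 2;
            stack[top++] = (struct frame){ f->n - 2, 0, 0 };      /* call fib(n - 2) */
            break;
        case 2:  /* back from fib(n - 2) */
            result = f->a + result;
            top--;                                       /* return */
            break;
        }
    }
    return result;
}
```

The explicit stack never holds more than `n` frames, which is why a fixed array bounded by `MAX_DEPTH` is enough here; a function with unbounded depth would need a growable stack instead.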