The code in the question (a linear search), as you rightly point out, is going to get slow for large float arrays. Technically it's O(n) where n is the number of float values in your array.

In general, the best that you can do to find a value in an ordered array is a recursive tree search of some kind (e.g. binary search), in which case you can achieve an O(log n) lookup time in the number of elements in your array. O(log n) is much better than O(n) for large values of n.

My suggested approach would therefore be a simple binary search of the array, i.e.:

1. Set min/max integer indexes to cover your whole float array.

2. Test the value in the middle of the range, at index mid = (min + max) / 2, against the search value x.

3. If x is lower than this value, set max to mid; otherwise set min to mid.

4. Repeat steps 2-3 until max = min + 1, at which point x lies between the values at min and max.

This is an O(log n) algorithm which should be fast enough for nearly all situations. Intuitively, it works by halving the range to be searched at each step until you find the correct value.
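A minimal C sketch of the steps above. It assumes the goal (as with the linear search in the question) is to find the interval of a sorted float array that contains x. `find_interval` is a hypothetical name, and the sketch assumes at least two elements with a[0] <= x < a[n-1]; handling values outside that range is left to the caller.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: returns lo such that a[lo] <= x < a[lo + 1].
 * Assumes a[0..n-1] is sorted ascending, n >= 2 and a[0] <= x < a[n - 1]. */
size_t find_interval(const float *a, size_t n, float x)
{
    assert(n >= 2 && a[0] <= x && x < a[n - 1]);
    size_t lo = 0, hi = n - 1;             /* invariant: a[lo] <= x < a[hi] */
    while (hi - lo > 1) {                  /* stop once the range is one interval */
        size_t mid = lo + (hi - lo) / 2;   /* midpoint without overflow */
        if (x < a[mid])
            hi = mid;                      /* x lies in the lower half */
        else
            lo = mid;                      /* x lies in the upper half */
    }
    return lo;
}
```

For example, with a = {0.0, 1.5, 2.0, 3.25, 7.0} and x = 2.5 it returns 2, since a[2] = 2.0 <= 2.5 < 3.25. Writing the midpoint as lo + (hi - lo) / 2 rather than (lo + hi) / 2 is a common habit that avoids integer overflow on very large arrays.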

It's really hard to beat the simple binary search, so if you have already implemented it correctly you may be pretty close to optimal already. However, if you know the distribution of the data and/or have a limited range of lookup values (x), there are still some more advanced tricks you can try:

- Bucketing - create buckets (e.g. one for each interval between two consecutive integers), each containing a smaller sorted list of the float values between the two bounding integers, plus the values immediately below and immediately above that range. You can then go straight to the bucket for trunc(x) and start your search there, at (trunc(x)+0.5); use floor(x) instead if x can be negative. This should give you a good speedup if you choose appropriately sized buckets (it effectively increases the branching factor of the tree). If integers don't work for you, you can try buckets of some other fixed-point precision (e.g. multiples of 1/16).

- Bit-mapping - if the range of possible lookup values is small enough, you could try creating a big lookup table indexed by the bitwise value of x. This will be O(1), but you may need a lot of memory, which will be very unfriendly to your cache, so use it with caution. This is especially nasty because you are looking up float values (a 32-bit float has 2^32 possible bit patterns), so you may well need several GB to account for all of the less significant bits.

- Rounding and hashing - hash tables are probably not the best data structure for this problem, but if you can tolerate losing a bit of accuracy they could work: simply round off the lowest bits of your lookup values and use a hashmap to look up the correct value directly. You will have to experiment to find the right trade-off between hashmap size and precision, and also ensure that all possible hash values are populated, so this can be a bit tricky.

- Tree-balancing - your ideal tree should have a 50% chance of going left or right at each node. So if you build the tree based on the distribution of lookup values (x), you can optimise it to produce answers with the minimum number of tests. This is likely to be a good solution if many values in your float array are very close together, since it will let you avoid searching those branches too often.

- Crit-bit trees - these are still trees (so still O(log n)), but they can be faster in some cases; you would, however, need to convert your floats into some fixed-point format in order to make the comparisons work.
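A minimal C sketch of the bucketing idea, assuming unit-width buckets over a known integer range [base, base + nbuckets) and the same a[lo] <= x < a[lo + 1] contract as a plain binary search. As a simplification it does not copy each bucket's values into a separate list; instead it records, per bucket, where that bucket's slice of the original sorted array begins, which gives the same effect (a short binary search inside the bucket) without duplicating data. `build_buckets`, `bucket_find` and `floor_to_long` are hypothetical names. It rounds with floor rather than trunc so that negative x also land in the correct bucket.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* floor() for floats in the range of long, done by hand to avoid needing -lm. */
static long floor_to_long(float x)
{
    long k = (long)x;              /* truncates toward zero */
    if ((float)k > x)
        k--;                       /* fix up negative non-integers */
    return k;
}

/* Builds start[0..nbuckets]: start[b] is the index of the last element
 * <= base + b. Bucket b covers [base + b, base + b + 1).
 * Requires a sorted ascending, a[0] <= base and base + nbuckets < a[n - 1].
 * Caller frees the result; returns NULL if allocation fails. */
size_t *build_buckets(const float *a, size_t n, long base, size_t nbuckets)
{
    assert(n >= 2 && a[0] <= (float)base);
    assert((float)(base + (long)nbuckets) < a[n - 1]);
    size_t *start = malloc((nbuckets + 1) * sizeof *start);
    if (start == NULL)
        return NULL;
    size_t i = 0;
    for (size_t b = 0; b <= nbuckets; b++) {
        float bound = (float)(base + (long)b);
        while (i + 1 < n && a[i + 1] <= bound)
            i++;                   /* advance to the last element <= bound */
        start[b] = i;
    }
    return start;
}

/* Returns lo with a[lo] <= x < a[lo + 1], for base <= x < base + nbuckets.
 * Only the slice belonging to x's bucket is binary-searched. */
size_t bucket_find(const float *a, const size_t *start, long base, float x)
{
    size_t b = (size_t)(floor_to_long(x) - base);  /* which bucket x falls in */
    size_t lo = start[b];                           /* a[lo] <= base + b <= x */
    size_t hi = start[b + 1] + 1;                   /* a[hi] > base + b + 1 > x */
    while (hi - lo > 1) {
        size_t mid = lo + (hi - lo) / 2;
        if (x < a[mid])
            hi = mid;
        else
            lo = mid;
    }
    return lo;
}
```

The index costs one size_t per bucket. Narrower buckets (such as multiples of 1/16) would mean scaling x and the bucket bounds by the same factor before flooring.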

However, unless you are in a very special situation I'd probably recommend sticking with the simple binary search. Reasons:

- it's much easier to implement

- it's very fast for most common cases

- the extra overhead of the more complex approaches (e.g. higher memory usage / cache pressure) often outweighs the minor theoretical gains

- it will be more robust to future changes in the data distribution

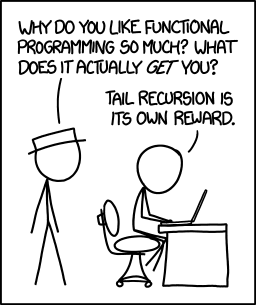

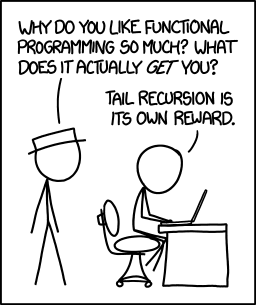

Tail call optimization is present in many languages and compilers. In this situation, the compiler recognizes a function of the form:

```c
int foo(int n) {
    /* ... */
    return bar(n);
}
```

Here, the compiler can recognize that the value being returned is simply the result of another function call, and replace that call (which would need a new stack frame) with a jump.

Note that the classic factorial function:

```c
int factorial(int n) {
    if (n == 0) return 1;
    if (n == 1) return 1;
    return n * factorial(n - 1);  /* multiply happens after the call returns */
}
```

is not tail-call optimizable, because the result of the recursive call still has to be multiplied by n before it can be returned. (Example source code and compiled output)

To make it tail-call optimizable, carry the running product in an accumulator argument:

```c
int _fact(int n, int acc) {
    if (n == 1) return acc;
    return _fact(n - 1, acc * n);  /* tail call: result returned unchanged */
}

int factorial(int n) {
    if (n == 0) return 1;
    return _fact(n, 1);
}
```

Compiling this code with `gcc -O2 -S fact.c` produces the following (-O2 is needed to enable the optimization; with the additional optimizations of -O3 the output gets harder for a human to read):

```asm
_fact(int, int):
        cmpl    $1, %edi
        movl    %esi, %eax
        je      .L2
.L3:
        imull   %edi, %eax
        subl    $1, %edi
        cmpl    $1, %edi
        jne     .L3
.L2:
        rep ret
```

(Example source code and compiled output)

In the loop at `.L3` you can see a `jne` (a conditional jump back to the top of the loop) rather than a `call`, which would perform a subroutine call with a new stack frame.
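For readers who don't read x86 assembly, here is my reading of that listing written back as C: the recursive call has become a loop that updates n and acc in place. This is an illustrative hand translation, not compiler output, and `fact_loop` is a hypothetical name; like `_fact`, it assumes n >= 1.

```c
#include <assert.h>

/* Hand translation of the -O2 listing for _fact. Assumes n >= 1. */
int fact_loop(int n, int acc)
{
    assert(n >= 1);
    while (n != 1) {        /* cmpl $1, %edi ; je .L2 / jne .L3 */
        acc *= n;           /* imull %edi, %eax */
        n -= 1;             /* subl $1, %edi */
    }
    return acc;             /* the result is already in %eax at .L2 */
}
```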

Note that this was done in C. Tail call optimization in Java is hard and depends on the JVM implementation (that said, I haven't seen any JVM that does it, partly because it is hard and partly because the Java security model requires stack frames, which is exactly what TCO eliminates). The tail-recursion + java and tail-recursion + optimization tag combinations are good ones to browse. You may find that other JVM languages handle tail recursion better: try Clojure (which requires the explicit recur form to get tail call optimization) or Scala.

That said, there is a certain joy in knowing that you wrote something right, in the ideal way that it can be done.

And now, I'm going to get some scotch and put on some German electronica...

To the general question of "methods to avoid a stack overflow in a recursive algorithm"...

Another approach is to include a recursion counter. This is more for detecting infinite loops caused by situations beyond one's control (and poor coding).

The recursion counter takes the following form:

```c
int foo(int arg, int counter) {
    if (counter > RECURSION_MAX) { return -1; }  /* too deep: give up */

    /* ... */

    return foo(arg, counter + 1);  /* one level deeper */
}
```

Each time you make a call, you increment the counter. If the counter gets too big, you error out (here just by returning -1, though in other languages you may prefer to throw an exception). The idea is to prevent worse things from happening (a stack overflow or an out-of-memory error) when a recursion goes much deeper than expected, which likely means an infinite loop.
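A small runnable sketch of the counter in use, assuming a hypothetical recursive sum over a linked list. If the list gets corrupted into a cycle (for example, through a concurrency bug), the plain recursion would never terminate; with the counter it fails cleanly instead. `struct node`, `sum_list` and the value of RECURSION_MAX are illustrative choices. The status goes in the return value and the sum in an out-parameter, so that -1 can't be mistaken for a legitimate negative sum.

```c
#include <assert.h>
#include <stddef.h>

#define RECURSION_MAX 10000   /* illustrative limit; size it for your stack */

struct node {
    int value;
    struct node *next;
};

/* Sums the list starting at p. Returns 0 and stores the sum in *out on
 * success, or -1 if the recursion got deeper than RECURSION_MAX. */
int sum_list(const struct node *p, int counter, long *out)
{
    assert(out != NULL);
    if (counter > RECURSION_MAX)
        return -1;                          /* too deep: probably a cycle */
    if (p == NULL) {
        *out = 0;                           /* end of list */
        return 0;
    }
    long rest;
    if (sum_list(p->next, counter + 1, &rest) != 0)
        return -1;                          /* propagate the error upward */
    *out = p->value + rest;
    return 0;
}
```

Call it as sum_list(head, 0, &sum); on a three-node list 1, 2, 3 it returns 0 with sum = 6, and if the last node is made to point back at the head it returns -1 after RECURSION_MAX levels.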

In theory, you shouldn't need this. In practice, I've seen poorly written code hit this limit because of a plethora of small errors and bad coding practices (for example, multithreaded concurrency issues where one thread changes state outside the method in a way that sends another thread into an infinite loop of recursive calls).

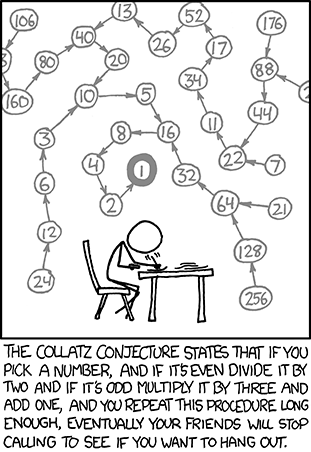

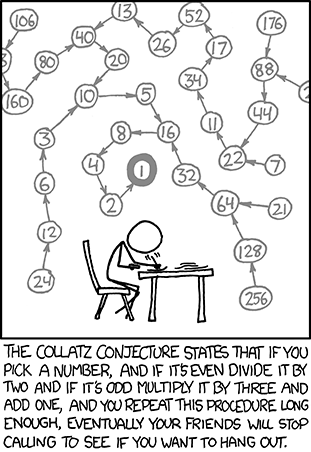

Use the right algorithm and solve the right problem. Specifically for the Collatz Conjecture, it appears that you are trying to solve it in the xkcd way:

You are starting at a number and doing a tree traversal, which rapidly leads to a very large search space. A quick run to calculate the number of iterations for the correct answer gives about 500 steps, which shouldn't be a problem for recursion with a small stack frame.

While knowing the recursive solution is not a bad thing, one should also realize that the iterative solution is often better. Several approaches to converting a recursive algorithm into an iterative one are discussed in the Stack Overflow question "Way to go from recursion to iteration".
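As a small example of the iterative style, here is a sketch that counts the steps in a single Collatz chain with a plain loop, so stack depth stays constant however long the chain gets. It assumes the task involves chain lengths (the question itself isn't reproduced here), and `collatz_steps` is a hypothetical name.

```c
#include <assert.h>

/* Counts Collatz steps from n down to 1: n -> n / 2 if n is even,
 * n -> 3n + 1 if n is odd. Values can climb well above the starting n,
 * hence unsigned long long. */
unsigned collatz_steps(unsigned long long n)
{
    assert(n >= 1);                 /* 0 would loop forever */
    unsigned steps = 0;
    while (n != 1) {
        n = (n % 2 == 0) ? n / 2 : 3 * n + 1;
        steps++;
    }
    return steps;
}
```

For instance, collatz_steps(27) returns 111.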


If your language processor (compiler or interpreter) properly implements tail recursion optimization, then there winds up being no difference between a properly-coded tail-recursive binary search and an iterative binary search. The language processor will turn the recursive calls into simple loops.
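To make the equivalence concrete, here is a sketch of both formulations in C over a sorted int array. In `bsearch_rec` every recursive call is in tail position (its result is returned unchanged), which is exactly the shape a tail-call-optimizing compiler can turn into the loop written out by hand in `bsearch_iter`. Both names are hypothetical, and whether a given compiler actually performs the optimization depends on the compiler and its flags.

```c
#include <assert.h>
#include <stddef.h>

/* Tail-recursive search for key in sorted a[lo..hi) (half-open range).
 * Returns the index of key, or -1 if it is absent. */
long bsearch_rec(const int *a, size_t lo, size_t hi, int key)
{
    assert(lo <= hi);
    if (lo == hi)
        return -1;                             /* empty range: not found */
    size_t mid = lo + (hi - lo) / 2;
    if (a[mid] == key)
        return (long)mid;
    if (key < a[mid])
        return bsearch_rec(a, lo, mid, key);   /* tail call */
    return bsearch_rec(a, mid + 1, hi, key);   /* tail call */
}

/* The same search with the tail calls turned into parameter updates. */
long bsearch_iter(const int *a, size_t lo, size_t hi, int key)
{
    assert(lo <= hi);
    while (lo < hi) {
        size_t mid = lo + (hi - lo) / 2;
        if (a[mid] == key)
            return (long)mid;
        if (key < a[mid])
            hi = mid;                          /* was: bsearch_rec(a, lo, mid, key) */
        else
            lo = mid + 1;                      /* was: bsearch_rec(a, mid + 1, hi, key) */
    }
    return -1;
}
```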

At that point, choice of recursive vs. iterative formulation is pretty much a matter of personal and local preference. Some people find recursive code easier to understand. Some people are scared to death of recursion, or don't understand it, or have no clue about tail recursion optimization, and want explicitly iterative code everywhere.